Event stream

Every workflow run emits a sequence of canonical run event envelopes that are:

- Stored durably in the run store

- Broadcast over SSE to connected API clients

- Stored for later analysis and retro generation

- Rendered by CLI progress and log tooling

- Optionally materialized into JSONL by export/debug paths

Event names

Event names use lowercase dot notation, for example:

- run.started

- stage.started

- stage.completed

- agent.tool.started

- agent.tool.completed

- sandbox.ready

- parallel.branch.completed

Envelope format

Each serialized event envelope has a stable JSON shape:

| Field | Description |
|---|---|
| id | Unique event id |
| ts | UTC timestamp |
| run_id | Workflow run id |
| event | Event name |
| session_id | Session that emitted the event, when applicable |
| parent_session_id | Immediate parent session for forwarded child events |
| node_id | Node or branch id, when applicable |
| node_label | Human-facing label for node_id, when applicable |
| properties | Event-specific payload |

id, ts, run_id, and event are always present. Optional fields are omitted when they do not apply.
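The envelope shape above can be modeled as a typed record. The following is a minimal Python sketch, assuming envelopes arrive as JSON objects with exactly the field names in the table; RunEvent and parse_envelope are hypothetical names for illustration, not part of Fabro.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Optional

# Fields documented as always present in every envelope.
REQUIRED_FIELDS = ("id", "ts", "run_id", "event")


@dataclass
class RunEvent:
    """Hypothetical in-memory model of one run event envelope."""
    id: str
    ts: str  # UTC timestamp, kept as the raw string
    run_id: str
    event: str
    session_id: Optional[str] = None
    parent_session_id: Optional[str] = None
    node_id: Optional[str] = None
    node_label: Optional[str] = None
    properties: dict[str, Any] = field(default_factory=dict)


def parse_envelope(line: str) -> RunEvent:
    """Parse one serialized envelope, rejecting any that lack a required field."""
    raw = json.loads(line)
    missing = [name for name in REQUIRED_FIELDS if name not in raw]
    if missing:
        raise ValueError(f"envelope missing required fields: {missing}")
    return RunEvent(
        id=raw["id"],
        ts=raw["ts"],
        run_id=raw["run_id"],
        event=raw["event"],
        # Optional fields are omitted from the JSON when they do not apply,
        # so .get() maps an absent field to None.
        session_id=raw.get("session_id"),
        parent_session_id=raw.get("parent_session_id"),
        node_id=raw.get("node_id"),
        node_label=raw.get("node_label"),
        properties=raw.get("properties", {}),
    )
```

Treating absent optional fields as None (rather than requiring null values in the JSON) matches the rule that optional fields are omitted when they do not apply.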

Reading the event stream

Because the event-specific payload lives in properties, most shell queries should look there.

fabro dump exports events.jsonl plus run-state projections.
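As a sketch of querying the payload, the Python below scans newline-delimited envelopes (an events.jsonl export or fabro logs output) and yields the properties of every event with a given name. properties_for is a hypothetical helper, and it assumes nothing about which keys a particular event's properties contain.

```python
import json
from typing import Any, Iterable, Iterator


def properties_for(lines: Iterable[str], event_name: str) -> Iterator[dict[str, Any]]:
    """Yield the properties payload of each envelope whose event equals event_name."""
    for line in lines:
        line = line.strip()
        if not line:
            continue  # tolerate blank lines, such as a trailing newline
        envelope = json.loads(line)
        if envelope["event"] == event_name:
            # The payload lives under "properties"; treat a missing one as empty.
            yield envelope.get("properties", {})
```

Because an open file iterates line by line, the file object for events.jsonl from a fabro dump output directory can be passed directly as lines.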

Event categories

Common categories include:

| Category | Example events |
|---|---|
| Run lifecycle | run.started, run.completed, run.failed, run.notice |
| Stage lifecycle | stage.started, stage.completed, stage.failed, stage.retrying |
| Agent activity | agent.message, agent.tool.started, agent.warning, agent.sub.spawned |
| Routing | edge.selected, loop.restart, parallel.started |
| Git and checkpoints | checkpoint.completed, git.commit, git.push |
| Setup and sandbox | sandbox.initializing, sandbox.ready, setup.started |
| Retro | retro.started, retro.completed, retro.failed |
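Because event names are dot-separated, a category can often be derived from the leading segment. The mapping below is a hypothetical sketch built only from the example events in the table; it is not an official Fabro classification, and names with any other leading segment fall back to "Other".

```python
# Hypothetical prefix-to-category map, derived from the example events above.
CATEGORY_BY_PREFIX = {
    "run": "Run lifecycle",
    "stage": "Stage lifecycle",
    "agent": "Agent activity",
    "edge": "Routing",
    "loop": "Routing",
    "parallel": "Routing",
    "checkpoint": "Git and checkpoints",
    "git": "Git and checkpoints",
    "sandbox": "Setup and sandbox",
    "setup": "Setup and sandbox",
    "retro": "Retro",
}


def category_of(event_name: str) -> str:
    """Classify an event by its first dot-separated segment."""
    prefix = event_name.split(".", 1)[0]
    return CATEGORY_BY_PREFIX.get(prefix, "Other")
```

Splitting on the dot, rather than using a plain string prefix test, keeps a hypothetical name such as runner.x from being misfiled under run.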

Sub-agent visibility

Sub-agent activity appears as normal agent events with session linkage:

- session_id identifies the child session

- parent_session_id identifies its immediate parent

agent.sub.spawned and agent.sub.completed are emitted by the parent session. Tool calls and other child activity are forwarded with their original session_id.
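Since forwarded child events keep their own session_id and carry parent_session_id, the session hierarchy can be rebuilt from the stream alone. A minimal Python sketch, assuming envelopes are already decoded into dicts; session_depths is a hypothetical helper that treats any session without a recorded parent as a root at depth 0.

```python
from typing import Any, Iterable


def session_depths(envelopes: Iterable[dict[str, Any]]) -> dict[str, int]:
    """Map each session_id to its nesting depth (0 for sessions with no known parent)."""
    parent_of: dict[str, str] = {}
    sessions: set[str] = set()
    for env in envelopes:
        session = env.get("session_id")
        if session is None:
            continue  # run-level events may not belong to any session
        sessions.add(session)
        parent = env.get("parent_session_id")
        if parent is not None:
            parent_of[session] = parent
            sessions.add(parent)

    def depth(session: str) -> int:
        # Walk up immediate-parent links, guarding against accidental cycles.
        seen: set[str] = set()
        d = 0
        while session in parent_of and session not in seen:
            seen.add(session)
            session = parent_of[session]
            d += 1
        return d

    return {s: depth(s) for s in sessions}
```

Only immediate-parent links are needed: deeper ancestry falls out of following them, which is why each envelope carries just one parent_session_id.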

Real-time monitoring

API: Server-Sent Events

When running workflows through the API server, subscribe to the run events endpoint. Each SSE payload is a serialized run event envelope in the same shape used by fabro logs and events.jsonl exports.
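The endpoint path is not given here, so the sketch below covers only the client-side decoding step. It follows the standard SSE framing (data: lines, a blank line ending each message, lines starting with a colon as comments) and decodes each message's data as one JSON envelope. iter_envelopes is a hypothetical name; pass it the decoded text lines of the response body from whichever HTTP client you use.

```python
import json
from typing import Any, Iterable, Iterator


def iter_envelopes(lines: Iterable[str]) -> Iterator[dict[str, Any]]:
    """Decode run event envelopes from the text lines of an SSE stream."""
    data_lines: list[str] = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line dispatches the buffered message, if any.
            if data_lines:
                yield json.loads("\n".join(data_lines))
                data_lines = []
            continue
        if line.startswith(":"):
            continue  # SSE comment, often sent as a keep-alive
        name, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # SSE strips a single leading space from the value
        if name == "data":
            data_lines.append(value)
        # Other fields (event, id, retry) are ignored in this sketch.
```

A message still buffered when the stream ends is discarded, which matches how SSE treats an unterminated final message.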

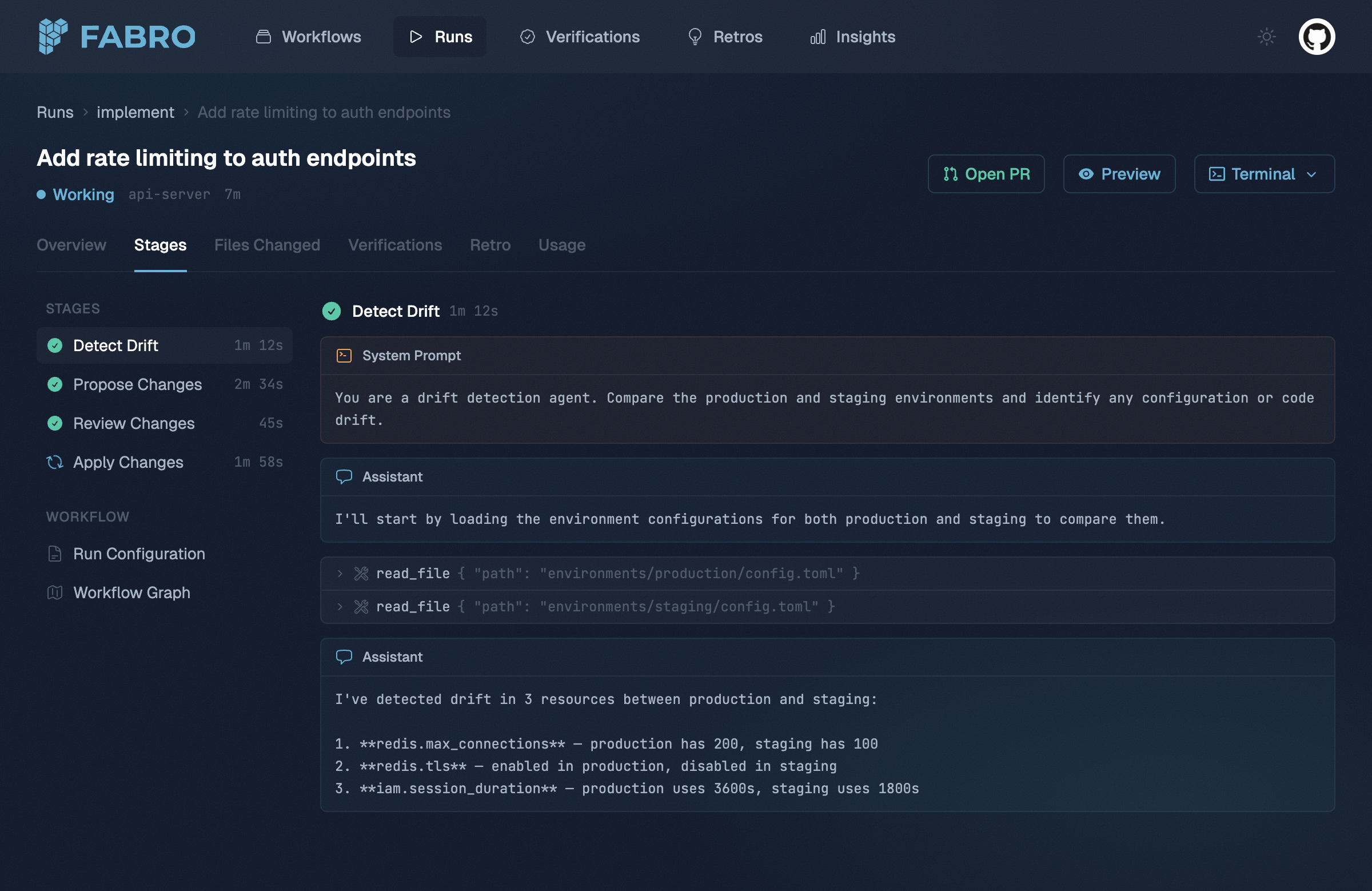

Web UI

The web frontend consumes the SSE stream automatically and shows stage progress, tool calls, and human interaction as they happen.

CLI progress

The CLI renders live progress from the same envelope format. Progress output is written to stderr so stdout remains pipe-friendly.

Post-run analysis

Post-run analysis surfaces include:

| Surface | Description |
|---|---|
| fabro logs <RUN> | Full event envelope stream as NDJSON |
| fabro inspect <RUN> | Current durable run state, including run/start/checkpoint/conclusion records |
| fabro dump --output <DIR> <RUN> | Exported events.jsonl plus reconstructed JSON and node files |
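As one example of post-run analysis, the sketch below computes per-node stage durations from envelope NDJSON such as fabro logs output. It assumes that ts is an ISO 8601 / RFC 3339 string and that stage.started and stage.completed carry the same node_id for a given stage; both are assumptions, and a retried stage is simplified to its most recent start. stage_durations and parse_ts are hypothetical helpers.

```python
import json
from datetime import datetime
from typing import Iterable


def parse_ts(ts: str) -> datetime:
    """Parse an ISO 8601 timestamp, accepting a trailing 'Z' for UTC."""
    if ts.endswith("Z"):
        ts = ts[:-1] + "+00:00"  # fromisoformat rejects 'Z' before Python 3.11
    return datetime.fromisoformat(ts)


def stage_durations(lines: Iterable[str]) -> dict[str, float]:
    """Return seconds between stage.started and stage.completed, keyed by node_id."""
    started: dict[str, datetime] = {}
    durations: dict[str, float] = {}
    for line in lines:
        if not line.strip():
            continue
        env = json.loads(line)
        node = env.get("node_id")
        if node is None:
            continue  # events without a node cannot be paired
        if env["event"] == "stage.started":
            started[node] = parse_ts(env["ts"])  # a retry overwrites the earlier start
        elif env["event"] == "stage.completed" and node in started:
            durations[node] = (parse_ts(env["ts"]) - started.pop(node)).total_seconds()
    return durations
```

Because fabro logs writes NDJSON, its output can be piped into a script that passes sys.stdin as lines.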